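DenseNet stands for Densely Connected Convolutional Networks. Its main advantages are that it effectively mitigates vanishing gradients, propagates features more efficiently, needs less computation and fewer parameters, and, according to the paper, outperforms ResNet at a comparable parameter budget. Its main drawback is a large memory footprint, because the feature maps of all earlier layers have to be kept around for concatenation.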

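Like ResNet and GoogLeNet, DenseNet was designed to tackle the vanishing-gradient problem in deep networks.

ResNet approaches the problem from the depth direction: by adding "shortcut" (skip) connections between earlier and later layers, it makes much deeper CNNs trainable.

GoogLeNet approaches it from the width direction, through the Inception module, which applies convolution kernels of different sizes to perceive features at different scales and then fuses the results into a better representation of the image.

DenseNet starts from the features themselves: by densely connecting every earlier layer to every later layer, it reuses all the features produced along the way, which improves results while reducing the parameter count.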
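The difference between a ResNet shortcut and a DenseNet connection is easiest to see in tensor shapes. The snippet below is only a rough sketch: the tensor sizes are made up for illustration, and `new` is a random stand-in for whatever the current layer would compute.

```python
import torch

x = torch.randn(1, 64, 8, 8)    # features arriving from earlier layers (sizes are arbitrary)
new = torch.randn(1, 64, 8, 8)  # stand-in for the current layer's output

residual = x + new                   # ResNet-style shortcut: element-wise sum
dense = torch.cat([x, new], dim=1)   # DenseNet-style connection: stack along the channel axis

print(residual.shape)  # torch.Size([1, 64, 8, 8])
print(dense.shape)     # torch.Size([1, 128, 8, 8])
```

Addition keeps the channel count fixed and mixes old and new features together, while concatenation keeps the earlier features untouched and lets the channel count grow.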

The DenseNet network

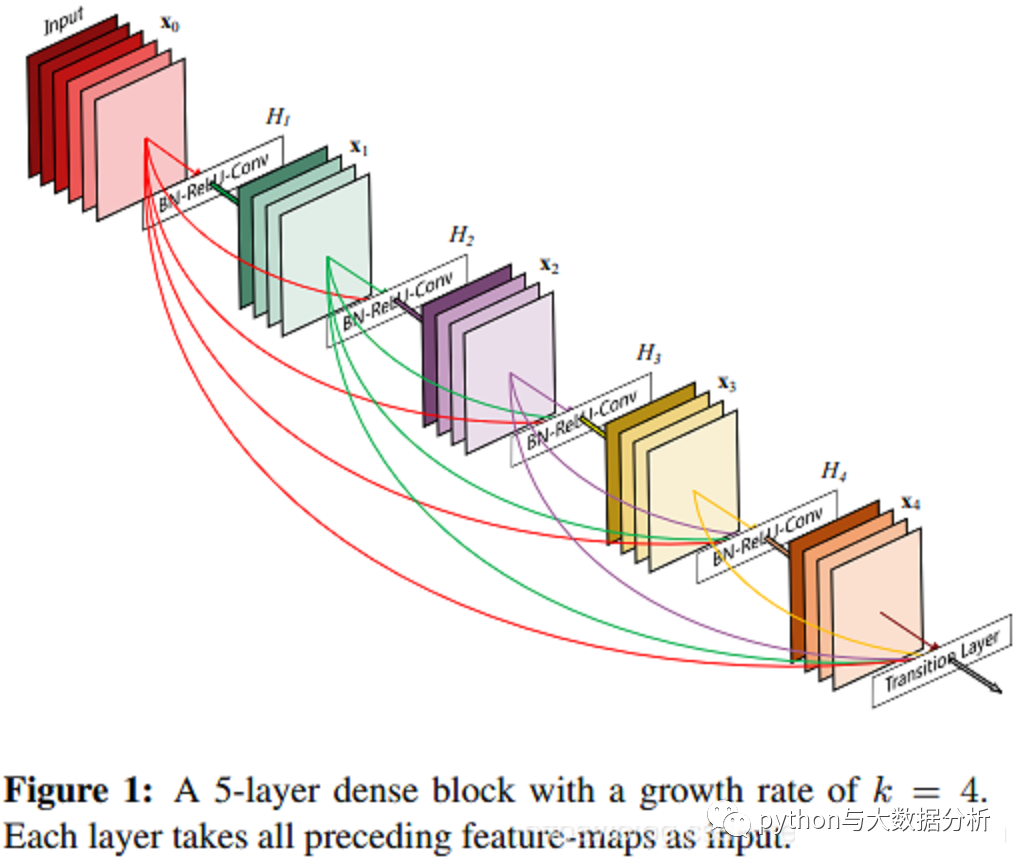

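Dense Block: just as GoogLeNet is built from Inception modules and ResNet from residual blocks (Residual Building Blocks), DenseNet is built from Dense Blocks, as the screenshot from the paper shows. Each layer takes the outputs of all preceding layers as additional input and passes its own feature maps on to all subsequent layers. The features are combined by concatenation, so every layer receives the "collective knowledge" of the layers before it. The growth rate k is the number of channels each layer adds.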
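The growth rate is just bookkeeping on channel counts: if a block starts with k0 channels, layer i sees k0 + i * k input channels. Here is a small sketch of that arithmetic; the helper name `dense_block_channels` and the example values are my own, not from the paper.

```python
def dense_block_channels(k0, k, num_layers):
    """Return the input channel count seen by each layer and the block's output count."""
    inputs = [k0 + i * k for i in range(num_layers)]
    output = k0 + num_layers * k
    return inputs, output


inputs, output = dense_block_channels(k0=64, k=32, num_layers=6)
print(inputs)  # [64, 96, 128, 160, 192, 224]
print(output)  # 256
```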

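To be honest, after all that I still do not fully understand the theory and the math behind it; following the code below is enough to get it working.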

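    import math

    import torch
    import torch.nn as nn
    import torch.nn.functional as F


    class Bottleneck(nn.Module):
        """BN-ReLU-Conv(1x1) then BN-ReLU-Conv(3x3); adds growth_rate channels."""

        def __init__(self, input_channel, growth_rate):
            super(Bottleneck, self).__init__()
            self.bn1 = nn.BatchNorm2d(input_channel)
            self.relu1 = nn.ReLU(inplace=True)
            # 1x1 convolution limits the width to 4 * growth_rate before the 3x3 convolution
            self.conv1 = nn.Conv2d(input_channel, 4 * growth_rate, kernel_size=1)
            self.bn2 = nn.BatchNorm2d(4 * growth_rate)
            self.relu2 = nn.ReLU(inplace=True)
            self.conv2 = nn.Conv2d(4 * growth_rate, growth_rate, kernel_size=3, padding=1)

        def forward(self, x):
            out = self.conv1(self.relu1(self.bn1(x)))
            out = self.conv2(self.relu2(self.bn2(out)))
            # Concatenate the new features with the input along the channel axis
            out = torch.cat([out, x], 1)
            return out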
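Why bother with the 1x1 convolution first? A rough weight count helps. The sketch below compares a direct 3x3 convolution with the 1x1 + 3x3 bottleneck used above; it ignores biases and BatchNorm parameters, and the function names are hypothetical helpers of mine.

```python
def conv_weights(c_in, c_out, kernel):
    """Number of weights in a 2D convolution, ignoring biases."""
    return c_in * c_out * kernel * kernel


def direct_cost(c_in, k):
    """A single 3x3 convolution from c_in to k channels."""
    return conv_weights(c_in, k, 3)


def bottleneck_cost(c_in, k):
    """1x1 convolution to 4k channels, then 3x3 convolution down to k channels."""
    return conv_weights(c_in, 4 * k, 1) + conv_weights(4 * k, k, 3)


for c_in in (64, 256, 512, 1024):
    print(c_in, direct_cost(c_in, 32), bottleneck_cost(c_in, 32))
```

With k = 32 the bottleneck is actually more expensive for a 64-channel input, and only pays off once the concatenated input grows past roughly 230 channels, which happens quickly in the deeper blocks.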

    class Transition(nn.Module):
        """Sits between two dense blocks: 1x1 convolution to shrink channels, then 2x2 average pooling."""

        def __init__(self, input_channels, out_channels):
            super(Transition, self).__init__()
            self.bn = nn.BatchNorm2d(input_channels)
            self.relu = nn.ReLU(inplace=True)
            self.conv = nn.Conv2d(input_channels, out_channels, kernel_size=1)

        def forward(self, x):
            out = self.conv(self.relu(self.bn(x)))
            # Halve the spatial resolution
            out = F.avg_pool2d(out, 2)
            return out
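To see what a transition layer does to a tensor, here is a minimal shape check. The same operations are written inline with `nn.Sequential` so the snippet runs by itself; the 256-channel, 56x56 input is an arbitrary size I picked for illustration.

```python
import torch
import torch.nn as nn

# Same operations as the transition layer, written inline
transition = nn.Sequential(
    nn.BatchNorm2d(256),
    nn.ReLU(inplace=True),
    nn.Conv2d(256, 128, kernel_size=1),  # reduction = 0.5 halves the channels
    nn.AvgPool2d(kernel_size=2),         # halves height and width
)

x = torch.randn(2, 256, 56, 56)
y = transition(x)
print(y.shape)  # torch.Size([2, 128, 28, 28])
```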

    class DenseNet(nn.Module):
        def __init__(self, nblocks, growth_rate, reduction, num_classes):
            super(DenseNet, self).__init__()
            self.growth_rate = growth_rate

            # Stem: 7x7 convolution and max pooling, producing 2 * growth_rate channels
            num_planes = 2 * growth_rate
            self.basic_conv = nn.Sequential(
                nn.Conv2d(3, 2 * growth_rate, kernel_size=7, stride=2, padding=3),
                nn.BatchNorm2d(2 * growth_rate),
                nn.ReLU(inplace=True),
                nn.MaxPool2d(kernel_size=3, stride=2, padding=1)
            )

            # Each dense block adds nblocks[i] * growth_rate channels;
            # each transition then shrinks the count by the reduction factor
            self.dense1 = self._make_dense_layers(num_planes, nblocks[0])
            num_planes += nblocks[0] * growth_rate
            out_planes = int(math.floor(num_planes * reduction))
            self.trans1 = Transition(num_planes, out_planes)
            num_planes = out_planes

            self.dense2 = self._make_dense_layers(num_planes, nblocks[1])
            num_planes += nblocks[1] * growth_rate
            out_planes = int(math.floor(num_planes * reduction))
            self.trans2 = Transition(num_planes, out_planes)
            num_planes = out_planes

            self.dense3 = self._make_dense_layers(num_planes, nblocks[2])
            num_planes += nblocks[2] * growth_rate
            out_planes = int(math.floor(num_planes * reduction))
            self.trans3 = Transition(num_planes, out_planes)
            num_planes = out_planes

            # No transition after the last dense block
            self.dense4 = self._make_dense_layers(num_planes, nblocks[3])
            num_planes += nblocks[3] * growth_rate

            self.AdaptiveAvgPool2d = nn.AdaptiveAvgPool2d(1)

            # Fully connected classifier
            self.fc = nn.Sequential(
                nn.Linear(num_planes, 256),
                nn.ReLU(inplace=True),
                # Randomly zero half of the activations during training to reduce overfitting
                nn.Dropout(0.5),
                nn.Linear(256, 128),
                nn.ReLU(inplace=True),
                nn.Dropout(0.5),
                # Use num_classes instead of a hard-coded 10
                nn.Linear(128, num_classes)
            )

        def _make_dense_layers(self, in_planes, nblock):
            layers = []
            for i in range(nblock):
                layers.append(Bottleneck(in_planes, self.growth_rate))
                # The next layer also sees the growth_rate channels just added
                in_planes += self.growth_rate
            return nn.Sequential(*layers)

        def forward(self, x):
            out = self.basic_conv(x)
            out = self.trans1(self.dense1(out))
            out = self.trans2(self.dense2(out))
            out = self.trans3(self.dense3(out))
            out = self.dense4(out)
            out = self.AdaptiveAvgPool2d(out)
            # Flatten to (batch, num_planes) for the classifier
            out = out.view(out.size(0), -1)
            out = self.fc(out)
            return out
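The `num_planes` bookkeeping in the constructor is easy to lose track of, so here is a small sketch that replays it without building any layers. `trace_channels` is a hypothetical helper of mine that mirrors the arithmetic above, shown for the [6, 12, 24, 16] layout.

```python
import math


def trace_channels(nblocks, growth_rate=32, reduction=0.5):
    """Follow num_planes through the stem, dense blocks and transitions."""
    num_planes = 2 * growth_rate              # channels after the stem convolution
    trace = [("stem", num_planes)]
    for i, n in enumerate(nblocks):
        num_planes += n * growth_rate         # each bottleneck adds growth_rate channels
        trace.append((f"dense{i + 1}", num_planes))
        if i < len(nblocks) - 1:              # no transition after the last dense block
            num_planes = int(math.floor(num_planes * reduction))
            trace.append((f"trans{i + 1}", num_planes))
    return trace


for name, channels in trace_channels([6, 12, 24, 16]):
    print(name, channels)
```

For this layout the channel count goes 64, 256, 128, 512, 256, 1024, 512, 1024, so the first `nn.Linear` in the classifier receives 1024 features.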

    def DenseNet121():
        return DenseNet([6, 12, 24, 16], growth_rate=32, reduction=0.5, num_classes=10)


    def DenseNet169():
        return DenseNet([6, 12, 32, 32], growth_rate=32, reduction=0.5, num_classes=10)


    def DenseNet201():
        return DenseNet([6, 12, 48, 32], growth_rate=32, reduction=0.5, num_classes=10)


    def DenseNet265():
        return DenseNet([6, 12, 64, 48], growth_rate=32, reduction=0.5, num_classes=10)

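    # Initialize the model
    import torch
    from torchstat import stat  # third-party helper for model summaries

    # Select the device; the model runs on either GPU or CPU
    DEVICE = torch.device("cuda" if torch.cuda.is_available() else "cpu")
    model = DenseNet121().to(DEVICE)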

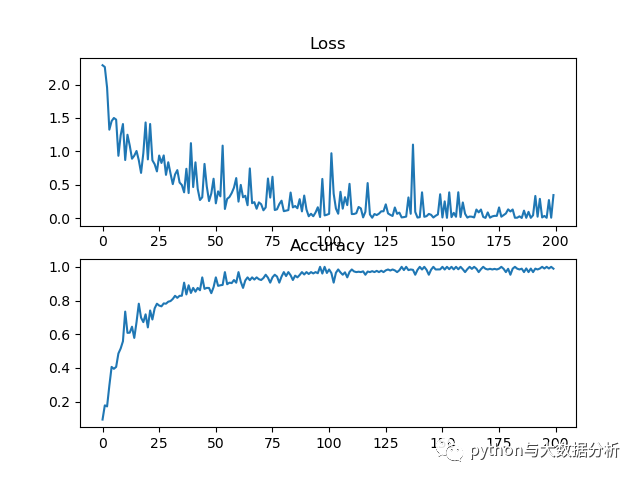

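I trained for 100 epochs on an NVIDIA GeForce GTX 1660 SUPER, at roughly one minute per epoch. The figure shows the training loss and accuracy of the DenseNet network; accuracy on the validation set also held at about 80%.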

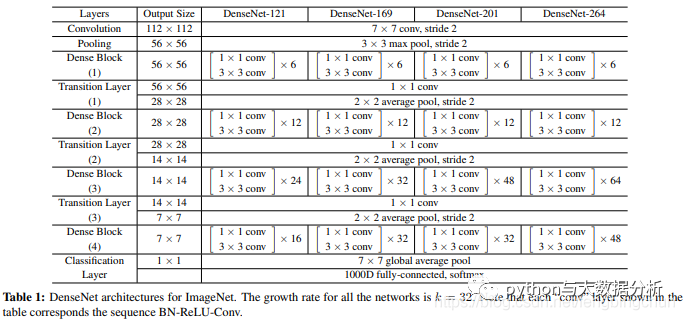

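DenseNet is also a family of models, including DenseNet-121, DenseNet-169 and so on. The paper gives DenseNets at four depths, shown in the screenshot from the paper. All of them use a growth rate of k = 32, meaning that every layer in a Dense Block outputs 32 feature maps.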
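The numbers in the model names can be recovered from the block layouts used in the code. The sketch below assumes the usual counting convention: two convolutions per bottleneck layer, one stem convolution, one convolution per transition, and a single classifier layer (the three-layer classifier in this post is not counted that way). `densenet_depth` is a name I made up.

```python
def densenet_depth(nblocks):
    """Count weighted layers under the convention described above."""
    convs_in_blocks = 2 * sum(nblocks)   # 1x1 and 3x3 convolution per bottleneck
    stem = 1                             # the initial 7x7 convolution
    transitions = len(nblocks) - 1       # one 1x1 convolution per transition
    classifier = 1                       # a single fully connected layer
    return convs_in_blocks + stem + transitions + classifier


for cfg in ([6, 12, 24, 16], [6, 12, 32, 32], [6, 12, 48, 32], [6, 12, 64, 48]):
    print(cfg, densenet_depth(cfg))
```

This gives 121, 169, 201 and 265, the last one matching the `DenseNet265` name used in the code.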

My enthusiasm for image-classification models has run out for now, so this series ends here; later posts will move on to some new topics.

Finally, you are welcome to follow the WeChat official account: python与大数据分析