Original title: Hypergraph convolution and hypergraph attention

Authors: Song Bai, Feihu Zhang, Philip H.S. Torr

Keywords: hypergraph, graph convolutional network, attention mechanism

Chinese abstract:

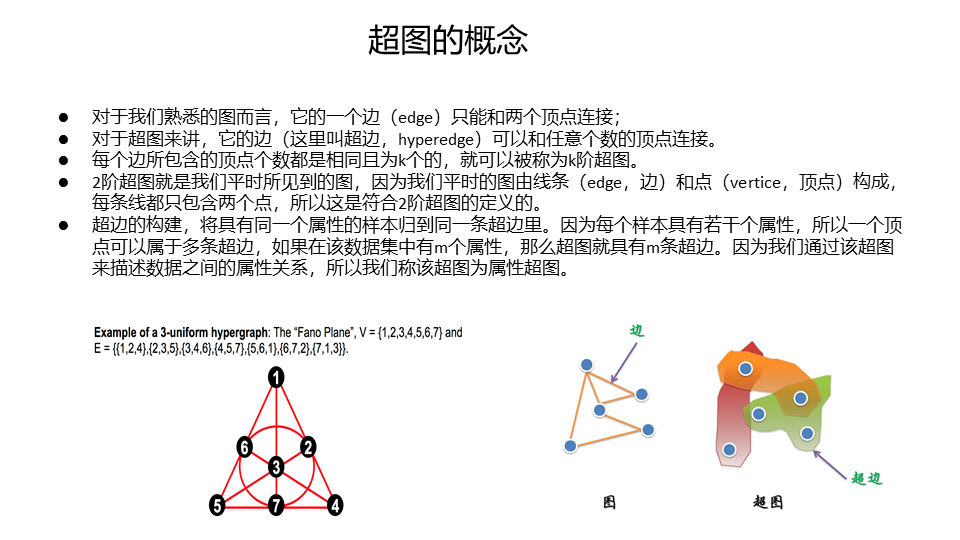

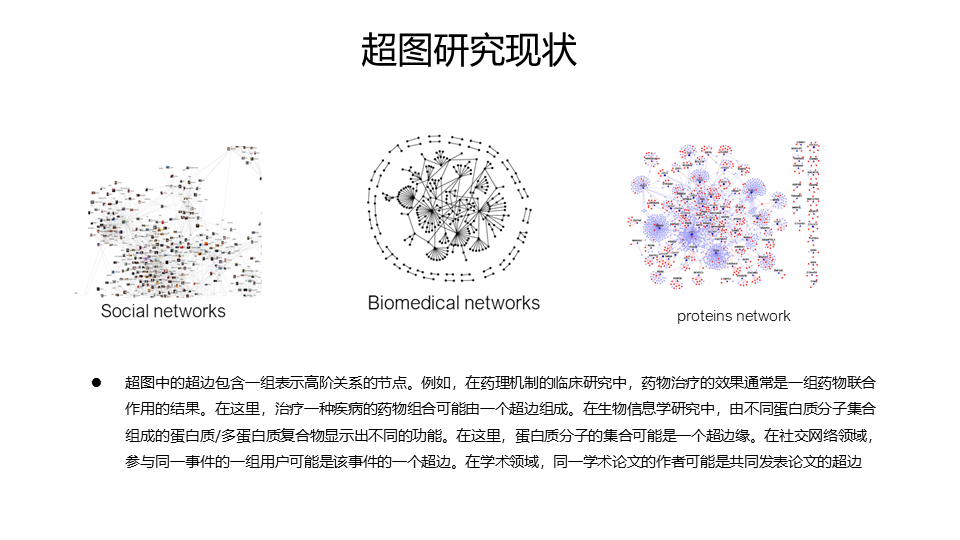

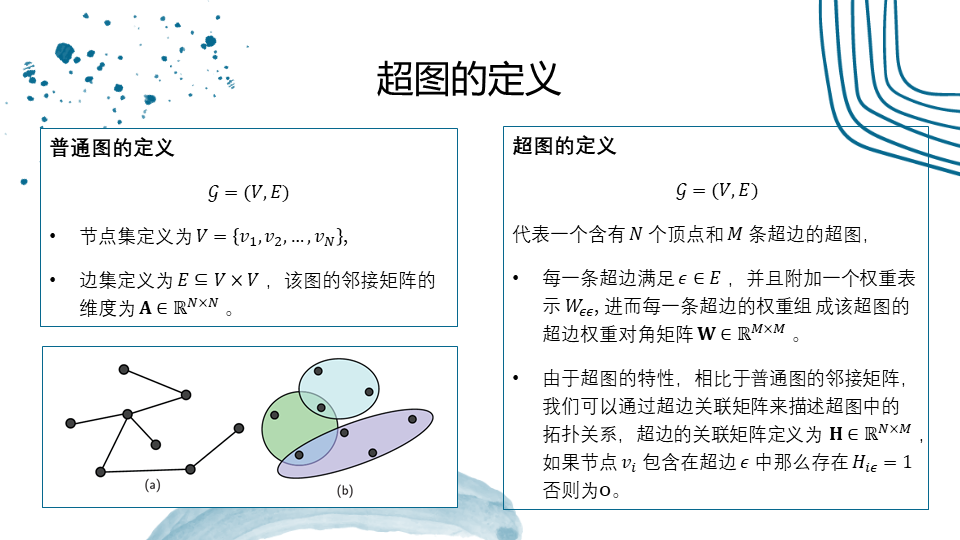

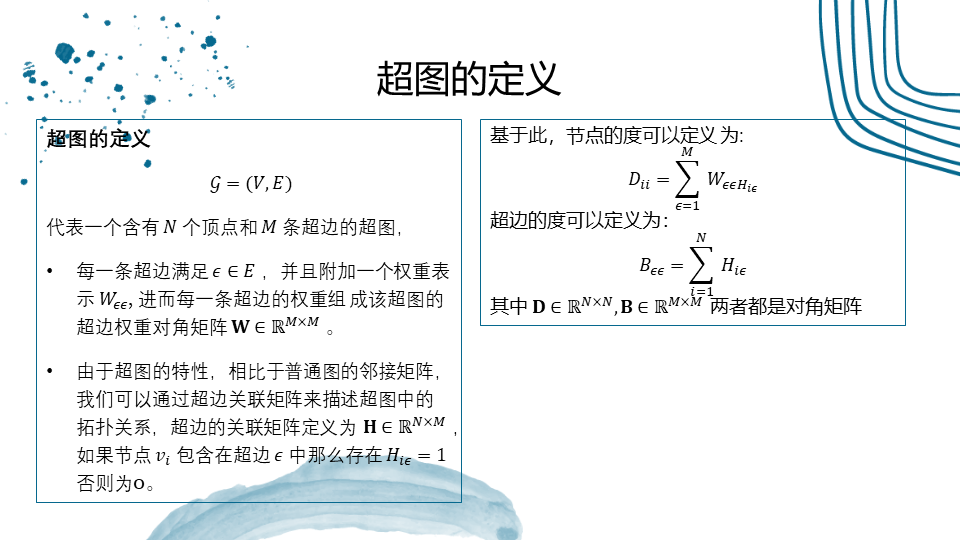

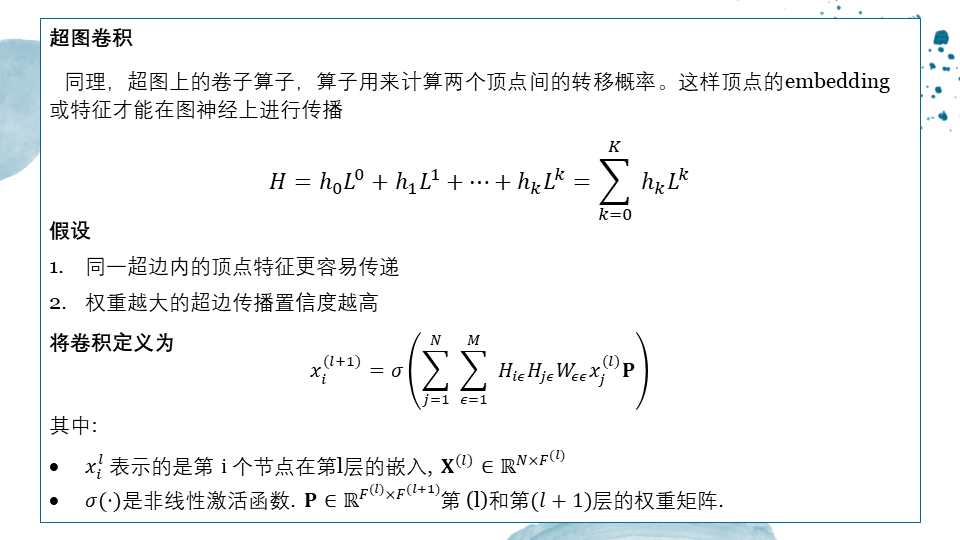

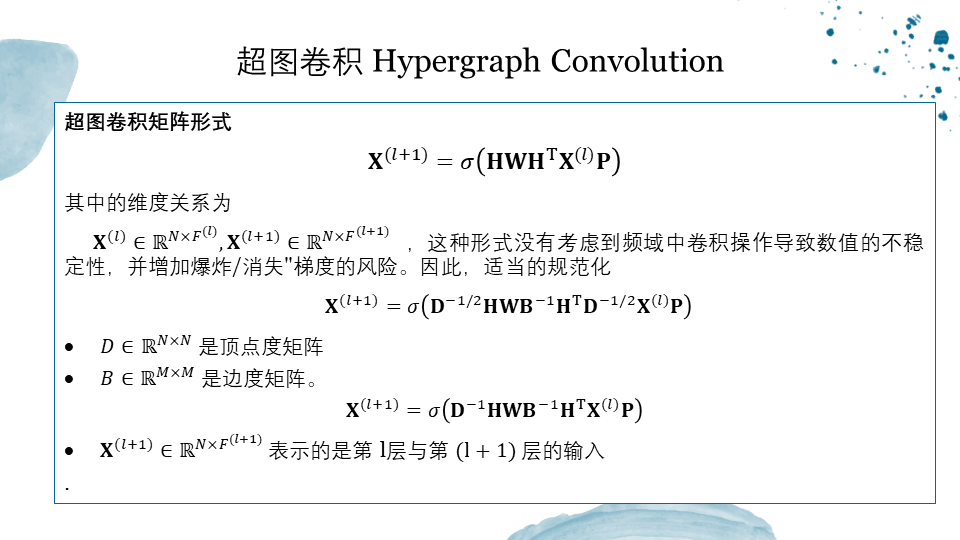

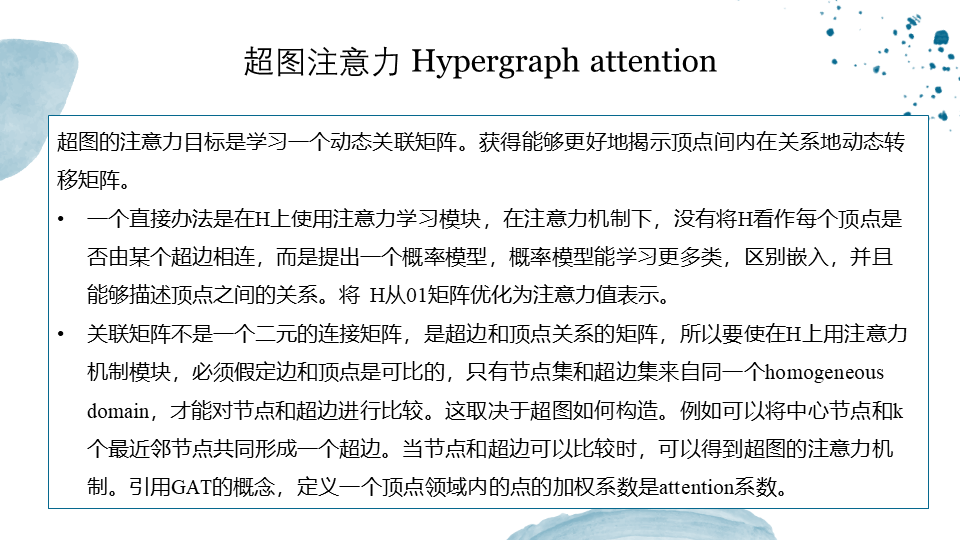

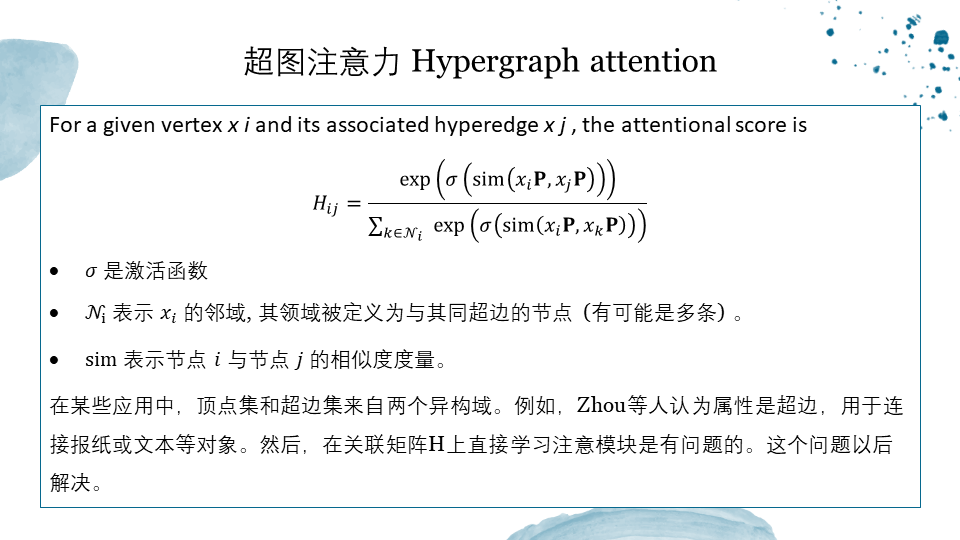

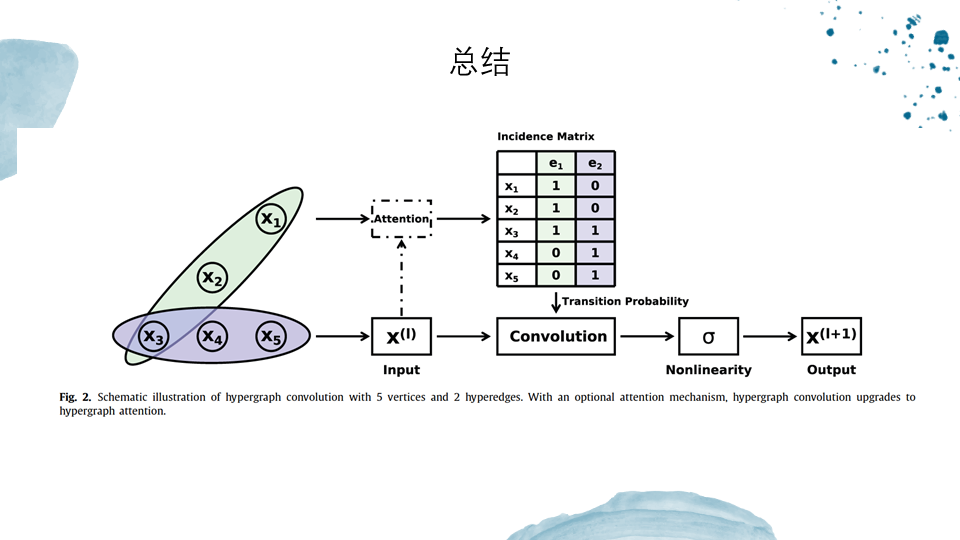

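In recent years, graph neural networks have attracted wide attention and achieved notable results across research fields. Most of these algorithms assume that nodes in a graph are related pairwise, i.e., an edge connects exactly two nodes. In many real applications, however, the relationships between objects are higher-order and go beyond pairwise relations. To learn deep embeddings effectively on high-order graph-structured data, this paper introduces two end-to-end trainable operators into graph neural networks: hypergraph convolution and hypergraph attention. Hypergraph convolution defines the basic formulation for performing convolution on a hypergraph, while hypergraph attention further strengthens the learning capacity by using an attention module. With these two operators, a graph neural network is readily extended to a more flexible model and applied to graphs with high-order relationships. Experimental results on semi-supervised node classification demonstrate the effectiveness of hypergraph convolution and hypergraph attention.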

English abstract:

Recently, graph neural networks have attracted great attention and achieved prominent performance in various research fields. Most of those algorithms have assumed pairwise relationships of objects of interest. However, in many real applications, the relationships between objects are in higher-order, beyond a pairwise formulation. To efficiently learn deep embeddings on the high-order graph-structured data, we introduce two end-to-end trainable operators to the family of graph neural networks, i.e., hypergraph convolution and hypergraph attention. Whilst hypergraph convolution defines the basic formulation of performing convolution on a hypergraph, hypergraph attention further enhances the capacity of representation learning by leveraging an attention module. With the two operators, a graph neural network is readily extended to a more flexible model and applied to diverse applications where non-pairwise relationships are observed. Extensive experimental results with semi-supervised node classification demonstrate the effectiveness of hypergraph convolution and hypergraph attention.
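The abstract does not spell out the convolution formula, so the following is only a minimal NumPy sketch of what one hypergraph convolution step might look like. It assumes the symmetric-normalized propagation rule X' = ReLU(D^{-1/2} H W B^{-1} H^T D^{-1/2} X P), where H is the node-by-hyperedge incidence matrix, W holds hyperedge weights, and D and B are node and hyperedge degrees. The function name `hypergraph_conv`, the toy hypergraph, and the identity weight matrix are illustrative assumptions, not code from the paper, and the attention operator is not covered here.

```python
import numpy as np


def hypergraph_conv(X, H, P, w=None):
    """One hypergraph convolution step (illustrative sketch, not the authors' code).

    X: (n_nodes, f_in) node features
    H: (n_nodes, n_edges) incidence matrix, H[i, e] = 1 if node i is in hyperedge e
    P: (f_in, f_out) weight matrix (learnable in a real model)
    w: (n_edges,) hyperedge weights, defaults to all ones
    """
    X = np.asarray(X, dtype=float)
    H = np.asarray(H, dtype=float)
    if w is None:
        w = np.ones(H.shape[1])
    w = np.asarray(w, dtype=float)

    D = H @ w          # node degree: weighted number of incident hyperedges
    B = H.sum(axis=0)  # hyperedge degree: number of nodes in each hyperedge

    # Guard against isolated nodes / empty hyperedges when inverting degrees.
    D_inv_sqrt = np.zeros_like(D)
    D_inv_sqrt[D > 0] = D[D > 0] ** -0.5
    B_inv = np.zeros_like(B)
    B_inv[B > 0] = 1.0 / B[B > 0]

    # Node -> hyperedge: average the (normalized) features inside each hyperedge.
    edge_feat = B_inv[:, None] * (H.T @ (D_inv_sqrt[:, None] * X))
    # Hyperedge -> node: weighted sum over the hyperedges each node belongs to.
    node_feat = D_inv_sqrt[:, None] * (H @ (w[:, None] * edge_feat))
    return np.maximum(node_feat @ P, 0.0)  # linear map followed by ReLU


# Toy hypergraph (assumed for illustration): 4 nodes, 2 hyperedges.
# Hyperedge 0 joins nodes {0, 1, 2}; hyperedge 1 joins nodes {2, 3}.
H = np.array([[1, 0],
              [1, 0],
              [1, 1],
              [0, 1]])
X = np.eye(4)  # one-hot node features, so the output shows the propagation matrix
P = np.eye(4)  # identity weights keep the example easy to read
out = hypergraph_conv(X, H, P)
```

With one-hot features and identity weights, `out` is the normalized propagation matrix itself: nodes 0, 1 and 2 exchange information through a single hyperedge, which a pairwise edge cannot express, while nodes 0 and 3 share no hyperedge and so do not influence each other in one step.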

Original paper: https://arxiv.org/abs/1901.08150

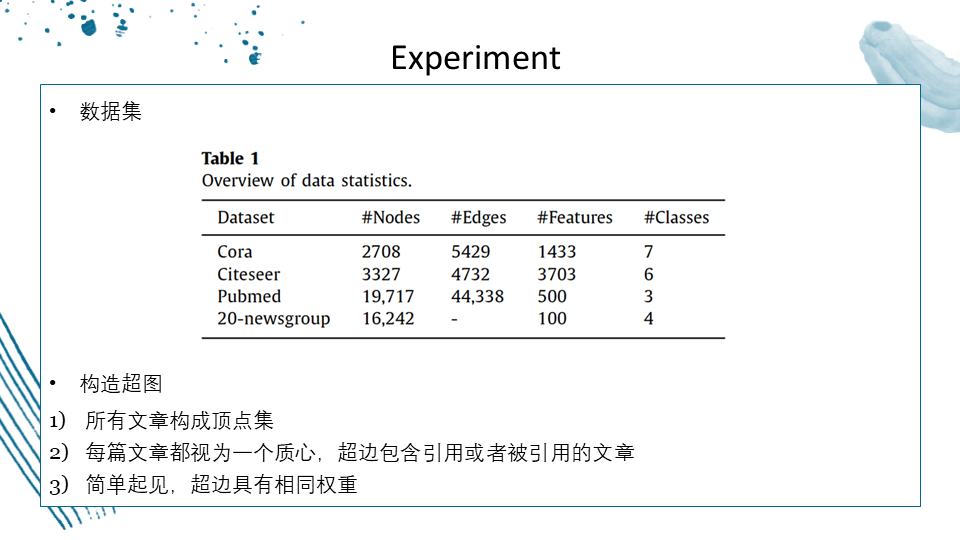

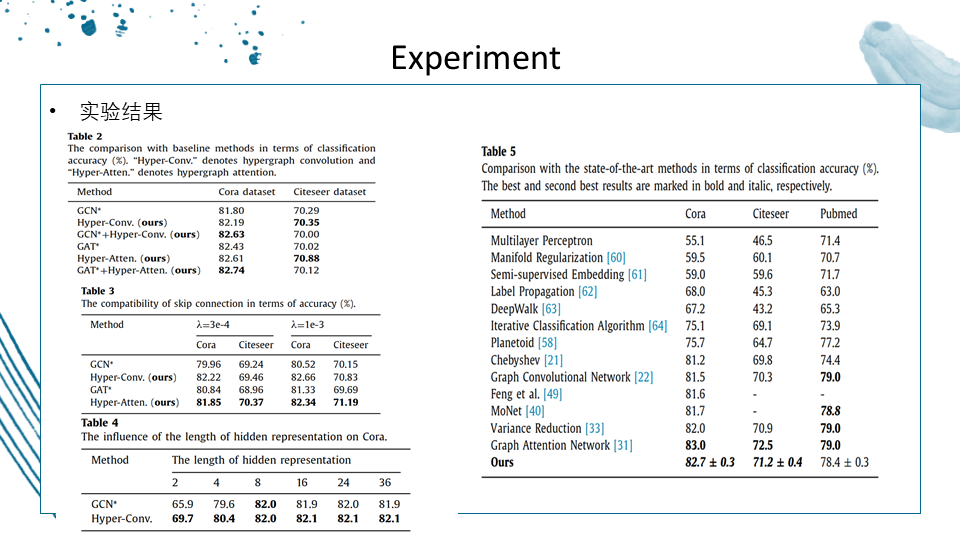

Literature summary: